Black Friday Cyber Monday (BFCM) 2024 was a massive event for Shopify. The platform processed 57.3 petabytes of data, handled 10.5 trillion database queries, and peaked at 284 million requests per minute on its edge network. App servers alone handled 80 million requests per minute while pushing 12 terabytes of data every minute on Black Friday.

That volume is now Shopify’s baseline. BFCM 2025 exceeded it, serving 90 petabytes of data, handling 14.8 trillion database queries and 1.75 trillion database writes, and peaking at 489 million requests per minute. Growth on this scale led Shopify to rebuild its entire BFCM readiness program.

Preparing for this scale took thousands of engineers nine months of work, including five major scale tests.

This article examines how Shopify prepared its platform for the most critical period of the commerce year.

Shopify’s BFCM preparation began in March and was built around a multi-region strategy on Google Cloud.

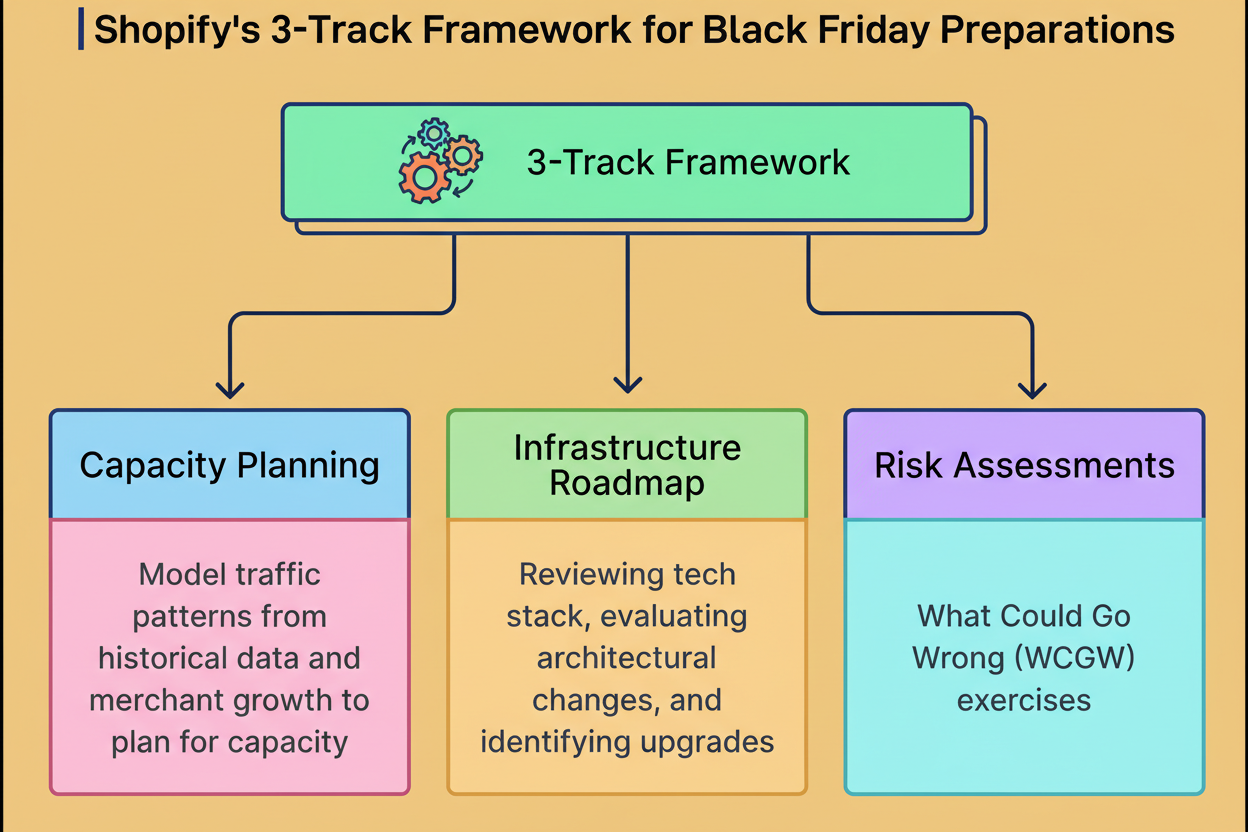

The engineering team structured its efforts into three concurrent and interdependent tracks:

Capacity Planning models traffic patterns from historical data and merchant growth projections. Shopify submits these estimates to its cloud providers early so that enough physical infrastructure is available when it is needed. This track determines how much computing power Shopify requires and how it should be distributed geographically.

The Infrastructure Roadmap reviews the technology stack, evaluates which architectural changes are needed, and identifies the system upgrades required to reach target capacity. This track also sets the order in which upcoming work is done. Importantly, Shopify does not treat BFCM as a release deadline: every architectural change and migration is completed months before the critical period.

Risk Assessments use “What Could Go Wrong” exercises to document potential failure scenarios. The team sets escalation priorities and produces inputs for its “Game Days.” These findings guide which systems to test and harden first.

The three tracks constantly feed into one another. For instance, risk findings may reveal capacity gaps that planning missed, and infrastructure changes may introduce new risks that then need to be assessed. The result is a continuous feedback loop.
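The Capacity Planning track comes down to arithmetic like the following. This is a minimal sketch under a simple assumed model, not Shopify’s method. Last year’s observed peak is scaled by a projected growth rate, padded with safety headroom, and split across regions by expected traffic share. The function names, growth rate, headroom, regional shares, and per-server throughput are all hypothetical.

```python
import math
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Region:
    name: str
    traffic_share: float  # hypothetical fraction of global traffic served by this region


def forecast_peak_rpm(last_peak_rpm: float, growth: float, headroom: float) -> float:
    """Scale last year's peak by projected growth, then add safety headroom."""
    return last_peak_rpm * (1 + growth) * (1 + headroom)


def split_by_region(peak_rpm: float, regions: List[Region]) -> Dict[str, float]:
    """Distribute the global peak across regions in proportion to their traffic share."""
    total_share = sum(r.traffic_share for r in regions)
    return {r.name: peak_rpm * r.traffic_share / total_share for r in regions}


def servers_needed(rpm: float, rpm_per_server: float) -> int:
    """Round up, since a fractional server still has to be provisioned as a whole one."""
    return math.ceil(rpm / rpm_per_server)


if __name__ == "__main__":
    # All inputs below are illustrative, not Shopify figures.
    peak = forecast_peak_rpm(last_peak_rpm=80_000_000, growth=0.30, headroom=0.25)
    regions = [Region("us-central", 0.5), Region("us-east", 0.3), Region("europe-west4", 0.2)]
    for name, rpm in split_by_region(peak, regions).items():
        print(f"{name}: {rpm:,.0f} rpm -> {servers_needed(rpm, 50_000)} servers")
```

The headroom term is a design trade-off. It pays for spare capacity in exchange for protection against a growth projection that turns out too low.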

To validate its risk assessments, Shopify runs Game Days: chaos engineering exercises designed to simulate production failures at BFCM scale.

The team began these Game Days in early spring. Engineers deliberately inject faults into systems to see how they respond under different failure conditions, much like a fire drill for software.
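Fault injection can be as simple as wrapping a dependency so that some calls deliberately fail or slow down. The sketch below is a generic illustration, not Shopify’s tooling. `with_faults`, `InjectedFault`, `count_failures`, and the `authorize_payment` stand-in are hypothetical names, and the error rates are arbitrary.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class InjectedFault(Exception):
    """Raised when the wrapper deliberately fails a call."""


def with_faults(fn: Callable[..., T], *, error_rate: float = 0.0,
                added_latency_s: float = 0.0,
                rng: Optional[random.Random] = None) -> Callable[..., T]:
    """Wrap `fn` so each call may be delayed and fails with probability `error_rate`."""
    if rng is None:
        rng = random.Random()

    def wrapper(*args, **kwargs) -> T:
        if added_latency_s > 0:
            time.sleep(added_latency_s)  # simulate a slow dependency
        if rng.random() < error_rate:
            raise InjectedFault(f"injected failure in {fn.__name__}")
        return fn(*args, **kwargs)

    return wrapper


def count_failures(call: Callable[[], object], attempts: int) -> int:
    """Run `call` repeatedly and count how many attempts hit an injected fault."""
    failures = 0
    for _ in range(attempts):
        try:
            call()
        except InjectedFault:
            failures += 1
    return failures


def authorize_payment() -> str:
    """Hypothetical stand-in for a checkout dependency."""
    return "authorized"


if __name__ == "__main__":
    flaky = with_faults(authorize_payment, error_rate=0.2, rng=random.Random(7))
    print(count_failures(flaky, 1_000), "of 1000 payment calls failed")
```

In a real drill the failure count matters less than how the caller reacts. The question is whether checkout retries, falls back, or surfaces a clear error.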

Game Days pay particular attention to “critical journeys,” the most business-critical paths through the platform, such as checkout, payment processing, order creation, and fulfillment. A failure in any of these during BFCM would cost merchants sales immediately.

Critical Journey Game Days run cross-system disaster simulations. Common aspects tested include:

These exercises build muscle memory for incident response while exposing gaps in operational playbooks and monitoring tools.

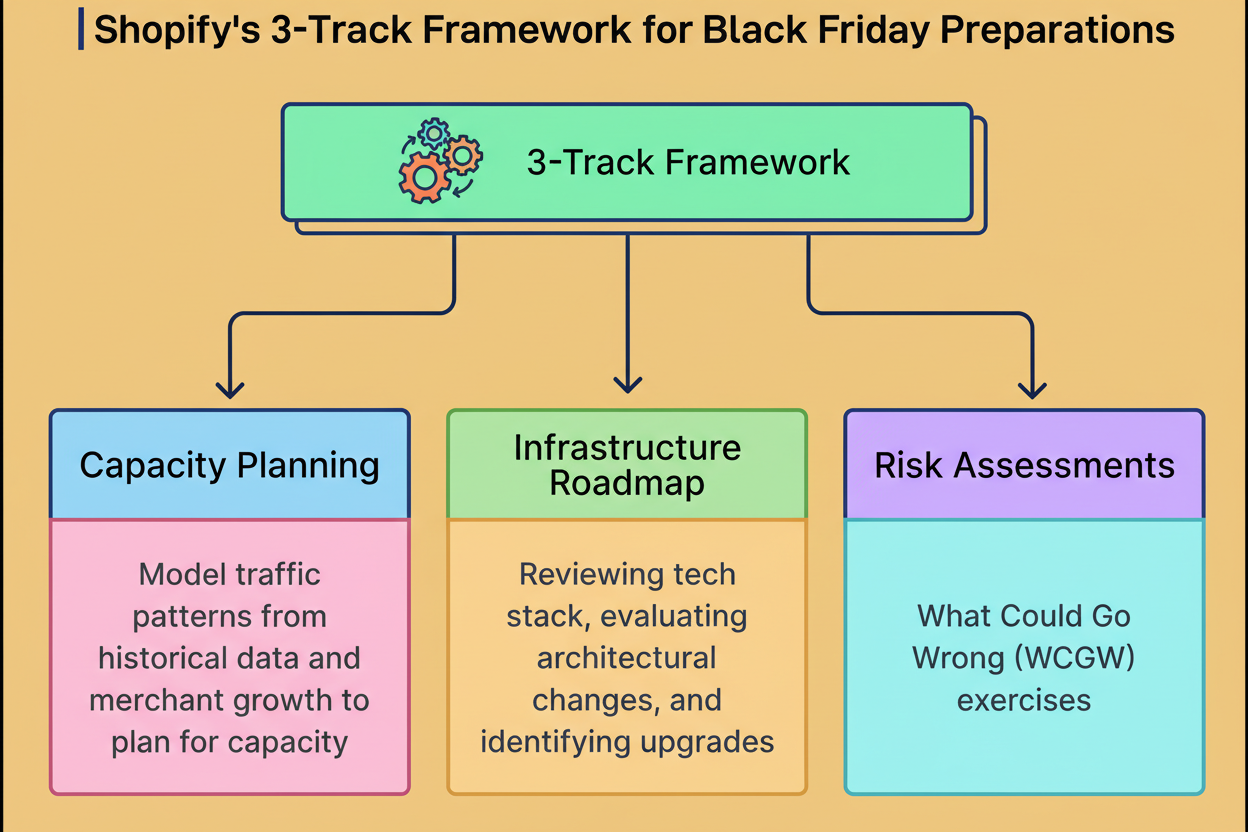

Shopify closes these gaps well before BFCM instead of discovering them at peak merchant demand. All Game Day findings feed into Shopify’s Resiliency Matrix, a centralized record of vulnerabilities, incident response procedures, and corrective actions across the entire platform.

The Resiliency Matrix comprises five essential components:

The Matrix serves as the roadmap for hardening systems ahead of BFCM. Teams update it throughout the year, recording each resilience improvement as it is implemented.

Game Days test components in isolation, but Shopify also needs to confirm that the platform as a whole can handle BFCM volumes. That is where load testing comes in.

The engineering team built a tool called Genghis that runs scripted workflows mimicking real user behavior, such as browsing, adding items to the cart, and checking out. Genghis gradually increases traffic until systems reach their breaking points, which reveals their actual capacity limits.
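Genghis is internal to Shopify, so the following only sketches the “ramp until it breaks” idea under simple assumptions. Load increases in fixed steps, and each step reports an error rate. The breakpoint is the first step whose error rate crosses a threshold. `find_breakpoint`, `simulated_service`, and the 1% threshold are hypothetical.

```python
from typing import Callable, Optional


def find_breakpoint(measure_error_rate: Callable[[int], float], start_rpm: int,
                    step_rpm: int, max_rpm: int,
                    max_error_rate: float = 0.01) -> Optional[int]:
    """Step load upward and return the highest load that stayed healthy.

    Returns None if even `start_rpm` exceeds the error budget.
    """
    last_healthy = None
    rpm = start_rpm
    while rpm <= max_rpm:
        if measure_error_rate(rpm) > max_error_rate:
            break  # breakpoint reached; stop ramping
        last_healthy = rpm
        rpm += step_rpm
    return last_healthy


def simulated_service(capacity_rpm: int) -> Callable[[int], float]:
    """Toy system: requests beyond capacity fail, everything else succeeds."""
    def measure(rpm: int) -> float:
        return max(0, rpm - capacity_rpm) / rpm
    return measure


if __name__ == "__main__":
    service = simulated_service(capacity_rpm=1_250_000)
    print(find_breakpoint(service, start_rpm=100_000, step_rpm=100_000, max_rpm=5_000_000))
```

In a real run, each step would hold steady long enough for caches, queues, and autoscalers to settle before the error rate is read.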

Tests run against production infrastructure from three Google Cloud regions at once (us-central, us-east, and europe-west4) to simulate global traffic patterns. Genghis also layers simulated flash-sale bursts on top of the baseline load to test peak capacity.
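Layering flash-sale bursts on top of a multi-region baseline can be modeled as a per-minute target schedule. This sketch uses the three regions named above, but the traffic shares, baseline, and burst sizes are invented. `Burst` and `load_profile` are hypothetical names, not part of Genghis.

```python
from typing import Dict, List, NamedTuple


class Burst(NamedTuple):
    start_minute: int
    duration_minutes: int
    extra_rpm: int  # load added on top of the baseline while the burst is active


def load_profile(minutes: int, baseline_rpm: int, bursts: List[Burst],
                 region_shares: Dict[str, float]) -> List[Dict[str, float]]:
    """Return, for each minute, the target requests per minute for each region."""
    profile = []
    for minute in range(minutes):
        total = baseline_rpm
        for burst in bursts:
            if burst.start_minute <= minute < burst.start_minute + burst.duration_minutes:
                total += burst.extra_rpm  # overlapping bursts stack
        profile.append({region: total * share for region, share in region_shares.items()})
    return profile


if __name__ == "__main__":
    shares = {"us-central": 0.5, "us-east": 0.3, "europe-west4": 0.2}
    schedule = load_profile(30, 1_000_000, [Burst(10, 5, 500_000)], shares)
    for minute in (9, 10, 14, 15):
        print(minute, schedule[minute])
```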

Shopify pairs Genghis with Toxiproxy, an open-source tool Shopify built for simulating network conditions. Toxiproxy injects network failures and partitions that deliberately disrupt communication between services. A network partition is a situation in which two parts of a system can no longer communicate with each other even though both are still running.
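Toxiproxy is controlled through an HTTP API, which listens on port 8474 by default. You create a proxy in front of a dependency, point the application at the proxy, and attach “toxics” such as `latency` or `timeout`. The sketch below only builds the API requests, so it runs without a Toxiproxy server; `send` executes them against a real one. The proxy name, ports, and helper functions are hypothetical. Modeling a partition as a `timeout` toxic with `timeout: 0` in both directions is one reasonable choice, not necessarily Shopify’s.

```python
import json
import urllib.request
from typing import List

TOXIPROXY_API = "http://127.0.0.1:8474"  # Toxiproxy's default API address


def _post(path: str, body: dict) -> urllib.request.Request:
    return urllib.request.Request(
        TOXIPROXY_API + path,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def create_proxy(name: str, listen: str, upstream: str) -> urllib.request.Request:
    """Create a proxy; the application connects to `listen` instead of `upstream`."""
    return _post("/proxies", {"name": name, "listen": listen,
                              "upstream": upstream, "enabled": True})


def add_latency(proxy: str, latency_ms: int, jitter_ms: int = 0) -> urllib.request.Request:
    """Delay all data flowing back from the upstream service."""
    return _post(f"/proxies/{proxy}/toxics", {
        "name": f"{proxy}_latency", "type": "latency", "stream": "downstream",
        "toxicity": 1.0, "attributes": {"latency": latency_ms, "jitter": jitter_ms},
    })


def add_partition(proxy: str) -> List[urllib.request.Request]:
    """Drop data in both directions without closing connections.

    A `timeout` toxic with timeout=0 keeps the connection open but lets nothing
    through, so both sides stay up yet cannot talk to each other.
    """
    return [
        _post(f"/proxies/{proxy}/toxics", {
            "name": f"{proxy}_partition_{stream}", "type": "timeout", "stream": stream,
            "toxicity": 1.0, "attributes": {"timeout": 0},
        })
        for stream in ("upstream", "downstream")
    ]


def remove_toxic(proxy: str, toxic_name: str) -> urllib.request.Request:
    """Remove a toxic so traffic flows normally again."""
    return urllib.request.Request(
        f"{TOXIPROXY_API}/proxies/{proxy}/toxics/{toxic_name}", method="DELETE")


def send(request: urllib.request.Request) -> int:
    """Execute a request against a running Toxiproxy server and return the HTTP status."""
    with urllib.request.urlopen(request) as response:
        return response.status


if __name__ == "__main__":
    # Hypothetical setup: the app reaches MySQL through 127.0.0.1:23306.
    for req in [create_proxy("mysql_primary", "127.0.0.1:23306", "127.0.0.1:3306"),
                add_latency("mysql_primary", latency_ms=500)]:
        print(req.get_method(), req.full_url, req.data)
```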

During these tests, teams watch dashboards in real time, ready to abort if systems start to degrade. Multiple teams work together to find and fix bottlenecks as they emerge.

When load testing uncovers capacity limitations, teams have three primary courses of action:

These decisions set the final BFCM capacity and guide optimization work across Shopify’s entire stack. The key lesson for the team is that capacity limits must be found well before BFCM, because scaling infrastructure and optimizing code take months.

BFCM stresses every system at Shopify, but 2025 brought a unique challenge: part of the infrastructure had never experienced holiday traffic. How do you prepare for peak load when you have no historical data to model it on?

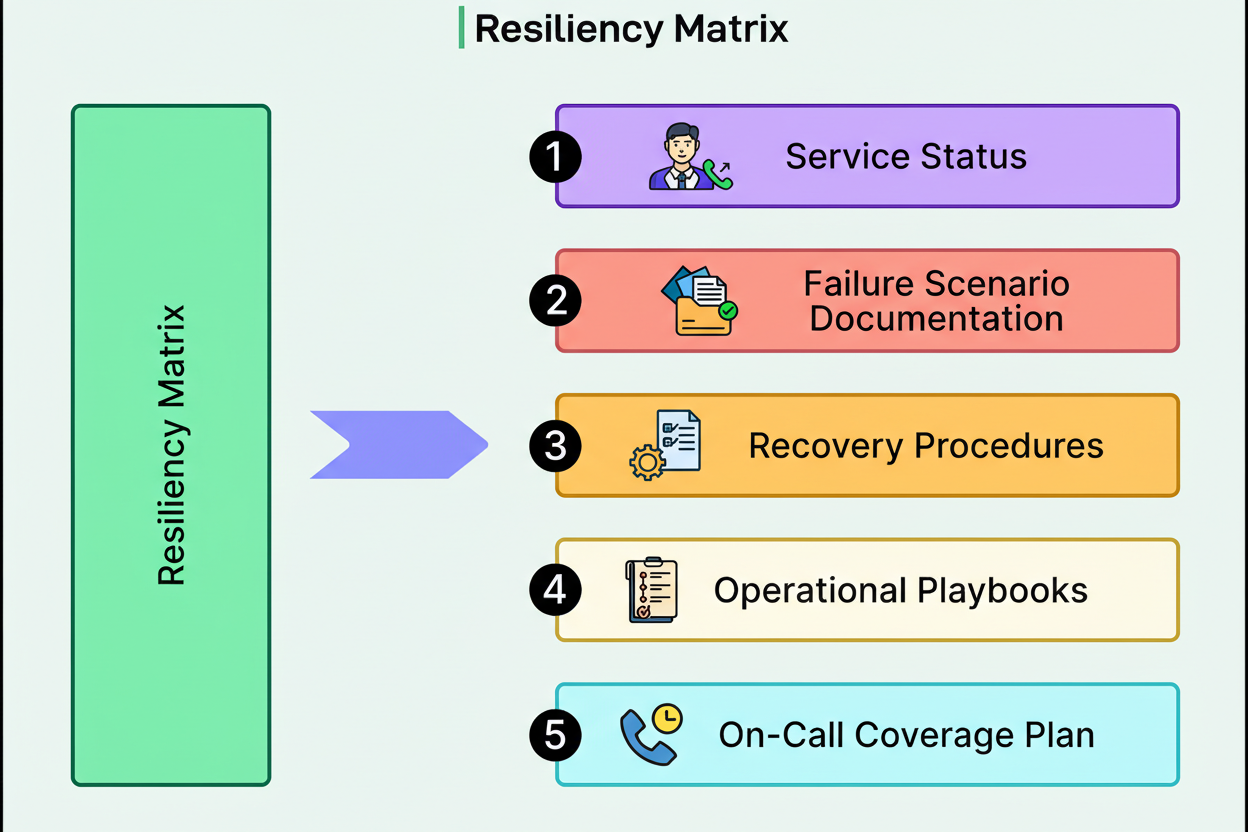

In 2024, Shopify’s engineering team rebuilt its analytics platform from the ground up. They built new ETL pipelines (ETL stands for Extract, Transform, Load: pulling data from various sources, processing it, and storing it in a usable format). At the same time, they migrated the persistence layer, replacing the legacy system with entirely new APIs.
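The three ETL stages can be shown in a few lines. This is a toy illustration of the pattern, not Shopify’s pipeline. The `timestamp,shop_id,amount_cents` record format, the hourly aggregation, and the dictionary standing in for a database are all assumptions.

```python
from collections import defaultdict
from datetime import datetime
from typing import Dict, Iterable, Iterator, Tuple

Key = Tuple[str, datetime]  # (shop_id, hour)


def extract(raw_lines: Iterable[str]) -> Iterator[Tuple[datetime, str, int]]:
    """Extract: parse raw 'timestamp,shop_id,amount_cents' order events."""
    for line in raw_lines:
        timestamp, shop_id, amount_cents = line.strip().split(",")
        yield datetime.fromisoformat(timestamp), shop_id, int(amount_cents)


def transform(events: Iterable[Tuple[datetime, str, int]]) -> Dict[Key, int]:
    """Transform: total sales per shop per hour."""
    totals: Dict[Key, int] = defaultdict(int)
    for timestamp, shop_id, amount_cents in events:
        hour = timestamp.replace(minute=0, second=0, microsecond=0)
        totals[(shop_id, hour)] += amount_cents
    return dict(totals)


def load(totals: Dict[Key, int], store: Dict[Key, int]) -> Dict[Key, int]:
    """Load: upsert aggregates into the store.

    Overwriting keeps re-runs of the same batch idempotent, assuming each
    batch contains complete hours.
    """
    store.update(totals)
    return store


if __name__ == "__main__":
    raw = [
        "2025-11-28T10:05:00,shop_1,1500",
        "2025-11-28T10:45:00,shop_1,2500",
        "2025-11-28T11:10:00,shop_2,999",
    ]
    warehouse = load(transform(extract(raw)), {})
    for (shop, hour), cents in sorted(warehouse.items()):
        print(shop, hour.isoformat(), cents)
```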

This created an asymmetry. The ETL pipelines had run through BFCM 2024, so a full season of production data showed how they performed under holiday load. The API layer, however, launched after that peak season, which meant Shopify was preparing for BFCM with APIs that had never handled holiday traffic.

This matters because merchants watch their analytics closely during BFCM. They want real-time sales figures, conversion rates, traffic patterns, and insight into which products are selling. Every one of those queries hits the API layer. If the APIs could not handle the load, merchants would lose visibility during their most important sales period.

Shopify ran dedicated Game Days for the analytics infrastructure: controlled experiments designed to uncover failure modes and bottlenecks. The team simulated high traffic, injected database latency, and tested cache failures to map how the system behaves under stress.
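Cache failures are worth testing partly because of load amplification. If a cache-aside read path treats an unavailable cache as a miss, every read falls through to the database. The sketch below counts database queries with the cache up and then down. `FlakyCache`, `CountingDatabase`, and `read_metric` are hypothetical names. The code illustrates the general pattern, not the specific issues Shopify found.

```python
from typing import Dict, Optional


class FlakyCache:
    """In-memory cache that can be switched off to simulate an outage."""

    def __init__(self) -> None:
        self.data: Dict[str, int] = {}
        self.down = False

    def get(self, key: str) -> Optional[int]:
        if self.down:
            raise ConnectionError("cache unavailable")
        return self.data.get(key)

    def set(self, key: str, value: int) -> None:
        if self.down:
            raise ConnectionError("cache unavailable")
        self.data[key] = value


class CountingDatabase:
    """Stand-in database that records how many queries reach it."""

    def __init__(self, rows: Dict[str, int]) -> None:
        self.rows = rows
        self.queries = 0

    def query(self, key: str) -> int:
        self.queries += 1
        return self.rows[key]


def read_metric(key: str, cache: FlakyCache, db: CountingDatabase) -> int:
    """Cache-aside read that degrades to the database when the cache is down."""
    try:
        cached = cache.get(key)
    except ConnectionError:
        cached = None  # treat an outage as a miss instead of failing the request
    if cached is not None:
        return cached
    value = db.query(key)
    try:
        cache.set(key, value)
    except ConnectionError:
        pass  # best effort: the cache may still be unavailable
    return value


if __name__ == "__main__":
    cache, db = FlakyCache(), CountingDatabase({"sales_today": 42})
    for _ in range(1_000):
        read_metric("sales_today", cache, db)
    print("cache up:", db.queries, "database queries")
    cache.down = True
    for _ in range(1_000):
        read_metric("sales_today", cache, db)
    print("cache down:", db.queries, "database queries in total")
```

With the cache down, database queries grow one-for-one with reads. That is the kind of behavior a Game Day is meant to expose before real holiday traffic does.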

The results revealed four critical issues that needed fixing:

Beyond performance fixes, the team validated alerting and documented response procedures. Engineers were trained and prepared to handle failures during the event itself.

Game Days and load testing prepare individual components, while scale testing takes a different approach. It validates that the entire platform works together at BFCM volumes, revealing issues that appear only when every system runs at full capacity at the same time.

From April through October, Shopify ran five large-scale tests at its forecasted traffic levels, targeting its peak p90 traffic assumptions. In statistical terms, p90 is the 90th percentile: the value that 90% of observations fall at or below. A p90 traffic assumption is therefore a level that actual traffic is expected not to exceed in 90% of cases.
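As a worked example of the definition, the nearest-rank method picks the smallest sample such that at least p% of samples are at or below it. The per-minute traffic samples below are invented for illustration.

```python
import math
from typing import Sequence


def percentile(samples: Sequence[float], p: int) -> float:
    """Nearest-rank percentile for an integer p in 1..100."""
    if not samples:
        raise ValueError("need at least one sample")
    if not 1 <= p <= 100:
        raise ValueError("p must be between 1 and 100")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


if __name__ == "__main__":
    # Hypothetical peak-minute traffic, in millions of requests per minute.
    rpm_samples = [92, 101, 110, 118, 121, 125, 130, 134, 140, 151]
    print("p90:", percentile(rpm_samples, 90), "p99:", percentile(rpm_samples, 99))
```

Other definitions, such as linear interpolation (the default in `numpy.percentile`), give slightly different values on small samples.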

Details of these scale tests include:

By the fourth test, Shopify reached 146 million requests per minute and more than 80,000 checkouts per minute. The final test of the year covered the p99 scenario and reached 200 million requests per minute.

These tests are so large that Shopify runs them at night and coordinates with YouTube, because they affect shared cloud infrastructure. The team tested resilience, not just raw load capacity: it ran regional failovers, evacuating traffic from core US and EU regions to confirm that its disaster recovery procedures worked.
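At its simplest, a regional evacuation is a capacity check: the evacuated region’s traffic has to fit into the headroom of the surviving regions. This sketch spreads the moved traffic in proportion to each surviving region’s capacity and reports which regions would be overloaded. The region names come from the load-testing setup described earlier. The traffic and capacity figures, the proportional policy, and the `evacuate` helper are assumptions.

```python
from typing import Dict, List, Tuple


def evacuate(traffic: Dict[str, float], capacity: Dict[str, float],
             region: str) -> Tuple[Dict[str, float], List[str]]:
    """Move all of `region`'s traffic to the other regions.

    Traffic is redistributed in proportion to each surviving region's capacity.
    Returns the new traffic map and the regions that would exceed capacity.
    """
    survivors = [r for r in traffic if r != region]
    surviving_capacity = sum(capacity[r] for r in survivors)
    if surviving_capacity <= 0:
        raise ValueError("no surviving capacity to fail over to")
    moved = traffic[region]
    new_traffic = {
        r: traffic[r] + moved * capacity[r] / surviving_capacity for r in survivors
    }
    overloaded = [r for r, load in new_traffic.items() if load > capacity[r]]
    return new_traffic, overloaded


if __name__ == "__main__":
    # Illustrative numbers in millions of requests per minute.
    traffic = {"us-central": 60, "us-east": 30, "europe-west4": 30}
    capacity = {"us-central": 100, "us-east": 60, "europe-west4": 60}
    print(evacuate(traffic, capacity, "us-central"))
```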

Shopify implemented four distinct types of tests:

The team simulated real user behavior: storefront browsing and checkout, admin API traffic from apps and integrations, analytics and reporting load, and backend webhook processing. It also tested critical scenarios such as sustained peak load, regional failover, and cascading failures, where one failure spreads and brings down other systems in turn.

Each test cycle surfaced issues that steady-state load would never reveal, and the team fixed each one as it appeared. Key issues included:

Midway through the program, Shopify made a significant change by adding authenticated checkout flows to its test scenarios. Modeling purchases by real logged-in buyers exercised rate-limiting code paths that anonymous browsing never touches. Although authenticated flows made up a small share of overall traffic, they exposed bottlenecks that would have caused serious problems during the actual event.
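The source does not describe Shopify’s rate limiter, but a generic per-buyer token bucket illustrates the effect. Only authenticated requests carry a buyer ID, so anonymous traffic never reaches the limiter. That is why a test mix without logged-in buyers can leave this path unexercised. `TokenBucketLimiter`, `handle_checkout`, and the rate and burst values are hypothetical.

```python
import time
from typing import Callable, Dict, Optional, Tuple


class TokenBucketLimiter:
    """Per-key token bucket: at most `burst` tokens, refilled at `rate_per_s`."""

    def __init__(self, rate_per_s: float, burst: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate_per_s
        self.burst = burst
        self.clock = clock
        self.buckets: Dict[str, Tuple[float, float]] = {}  # key -> (tokens, last_seen)

    def allow(self, key: str) -> bool:
        now = self.clock()
        tokens, last = self.buckets.get(key, (self.burst, now))
        tokens = min(self.burst, tokens + (now - last) * self.rate)  # refill since last call
        allowed = tokens >= 1
        if allowed:
            tokens -= 1
        self.buckets[key] = (tokens, now)
        return allowed


def handle_checkout(limiter: TokenBucketLimiter, buyer_id: Optional[str]) -> int:
    """Return an HTTP-style status code for a checkout attempt."""
    # Anonymous sessions have no buyer ID, so they skip the limiter entirely.
    if buyer_id is not None and not limiter.allow(buyer_id):
        return 429  # Too Many Requests
    return 200


if __name__ == "__main__":
    limiter = TokenBucketLimiter(rate_per_s=0.5, burst=3)
    print([handle_checkout(limiter, "buyer-123") for _ in range(5)])
```

Keying buckets per buyer means memory grows with the number of active buyers. A production limiter would also need to expire idle entries.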

Preparation gets Shopify ready for BFCM, but operational excellence is what keeps the platform stable when traffic surges.

The operational plan coordinates engineering teams, incident response, and real-time system tuning. Its key components include:

After BFCM ends, a post-mortem process correlates monitoring data with actual merchant outcomes to determine what worked and what needs improvement.

The philosophy is simple: preparation ensures readiness, and operational excellence ensures stability.

Shopify’s 2025 BFCM readiness program shows systematic preparation at enormous scale. Thousands of engineers worked on it for nine months, running five major scale tests that pushed the infrastructure to 150% of expected load. They executed regional failovers, ran chaos engineering exercises, documented system vulnerabilities, and hardened systems with updated runbooks well before merchants needed them.

What sets this apart from typical pre-launch preparation is the method. Many organizations run load tests once or twice, fix the critical bugs, and hope for the best. Shopify spent nine months testing continuously: finding breaking points, fixing issues, and verifying that the fixes worked.

The tools Shopify built are also not throwaway BFCM scaffolding. The Resiliency Matrix, Critical Journey Game Days, and real-time adaptive forecasting have become permanent parts of its infrastructure, strengthening the platform’s resilience well beyond peak season.

To visualize BFCM activity, Shopify also released a pinball game built around the Shopify Live Globe. The game runs at 120 frames per second in the browser and includes a full 3D environment, a physics engine, and VR support. Technically, it is a three.js application built with react-three-fiber. Every merchant sale appears on the globe within a few seconds. The game and its visualization are available on the Shopify Live Globe homepage.
