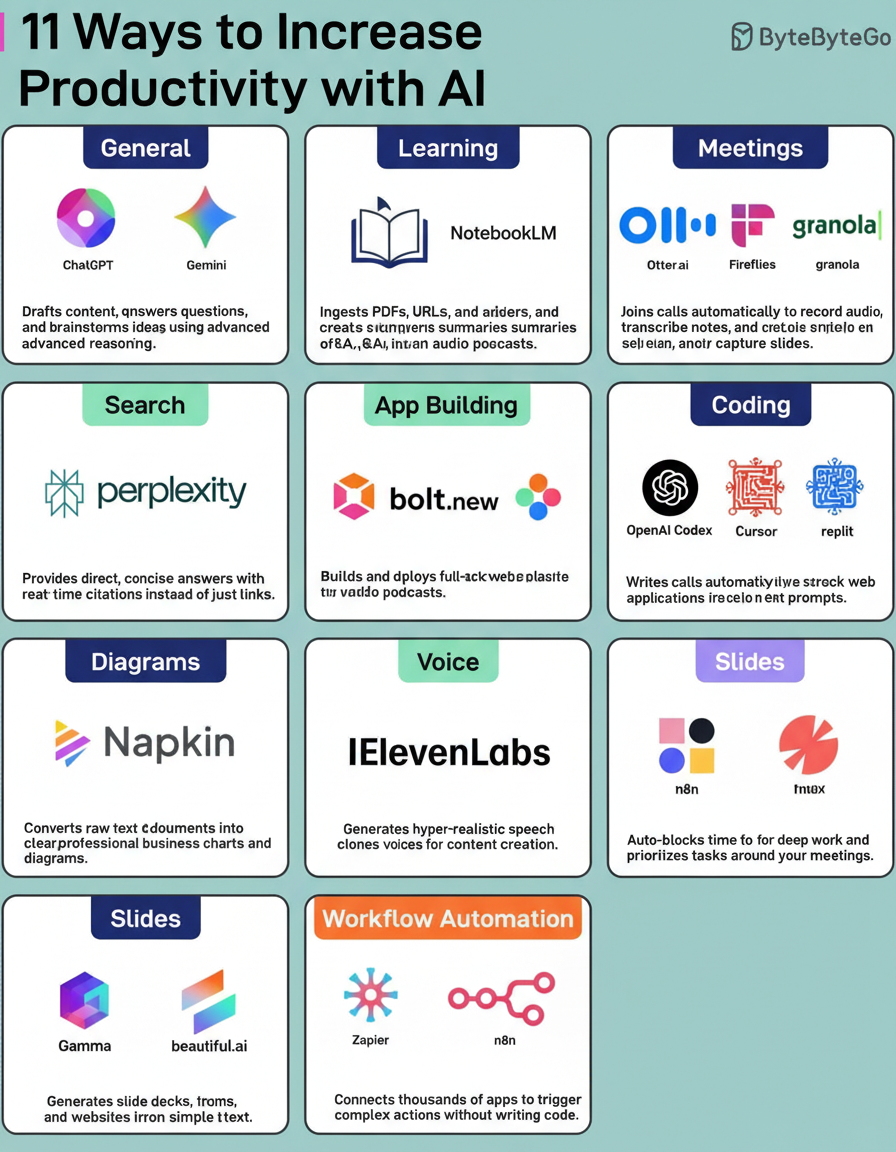

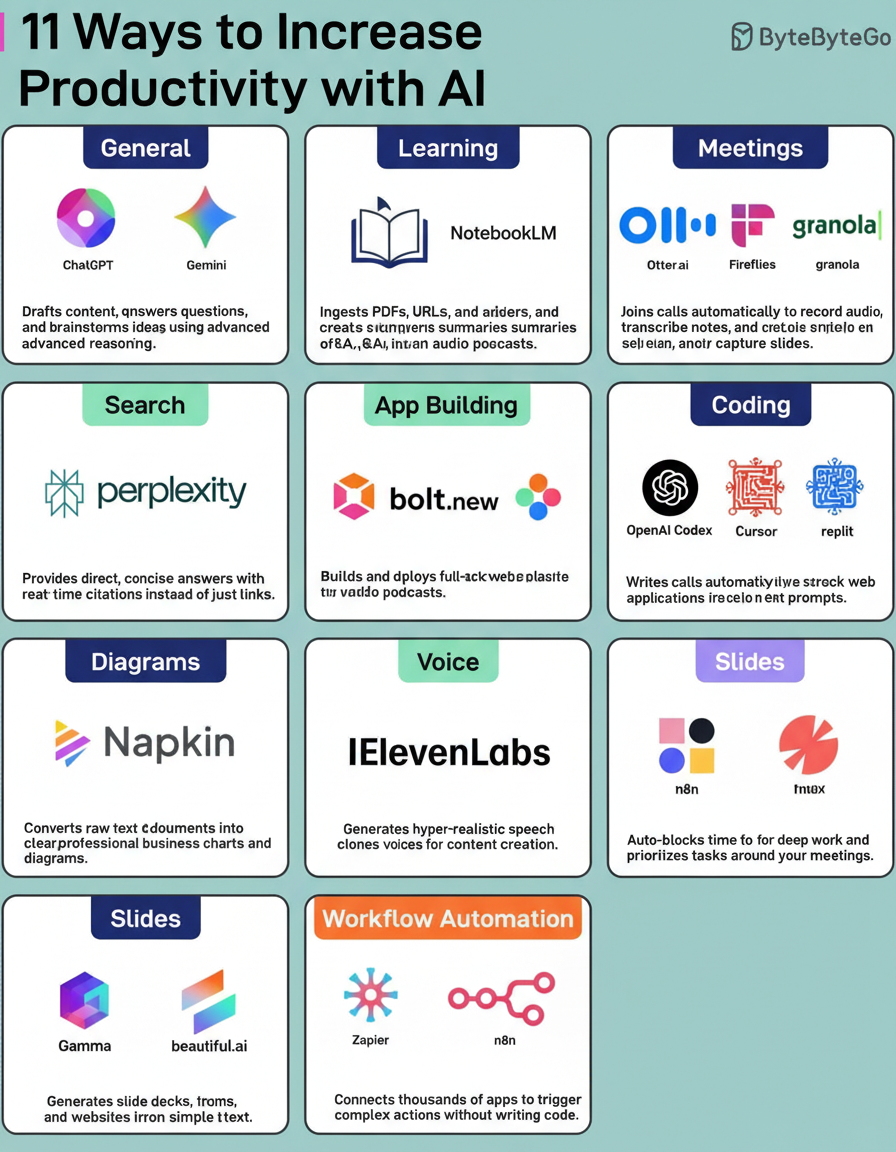

Artificial intelligence is transforming the way tasks are executed, allowing for greater output in less time without requiring coding skills. Understanding which AI tools to utilize and when is essential for maximizing productivity.

For instance, rather than reading through long technical blog posts, a reader can upload them to Google’s NotebookLM and get a quick summary of the key takeaways. Similarly, Otter.ai turns meeting transcripts into action items, decisions, and highlights.

The 19 productivity tools collected here cover different areas of a workflow and are meant to streamline everyday work. They are most useful for getting unstuck when starting a task.

Consider sharing insights on lesser-known yet valuable AI tools.

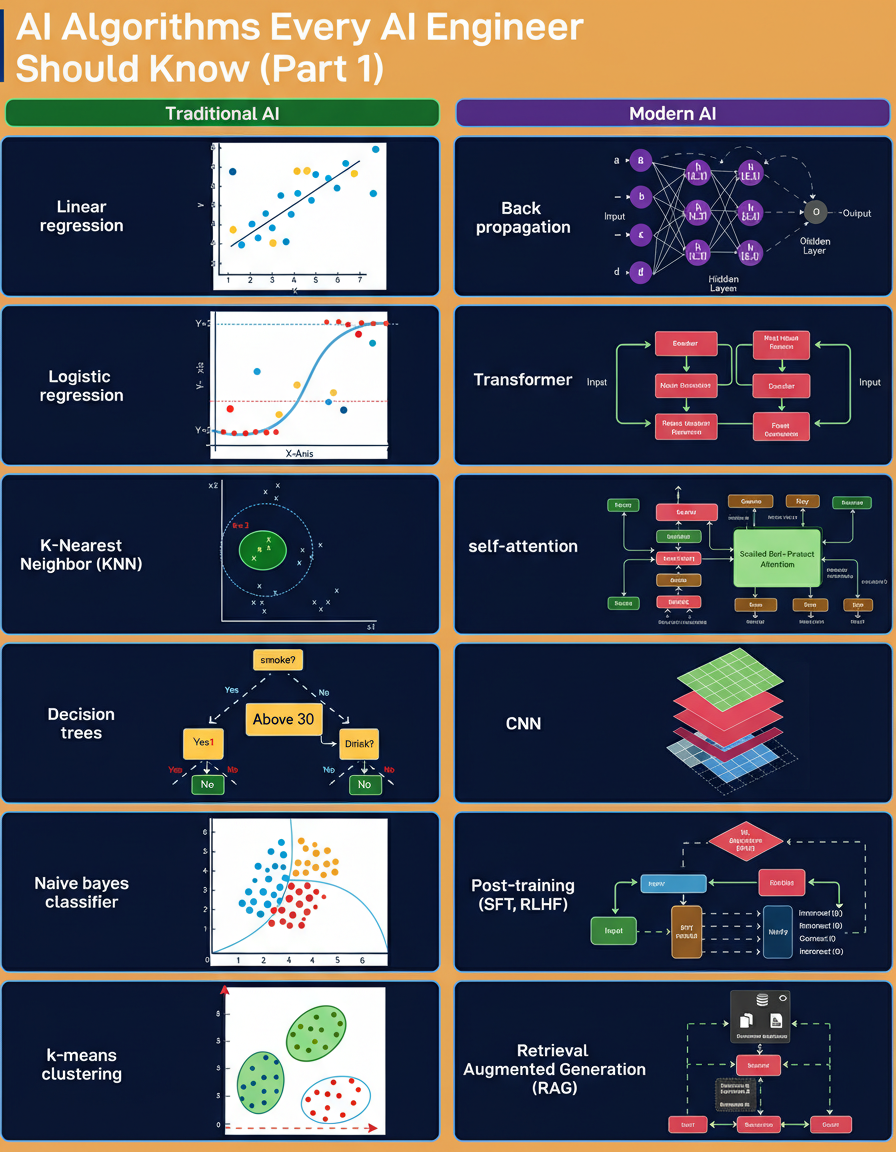

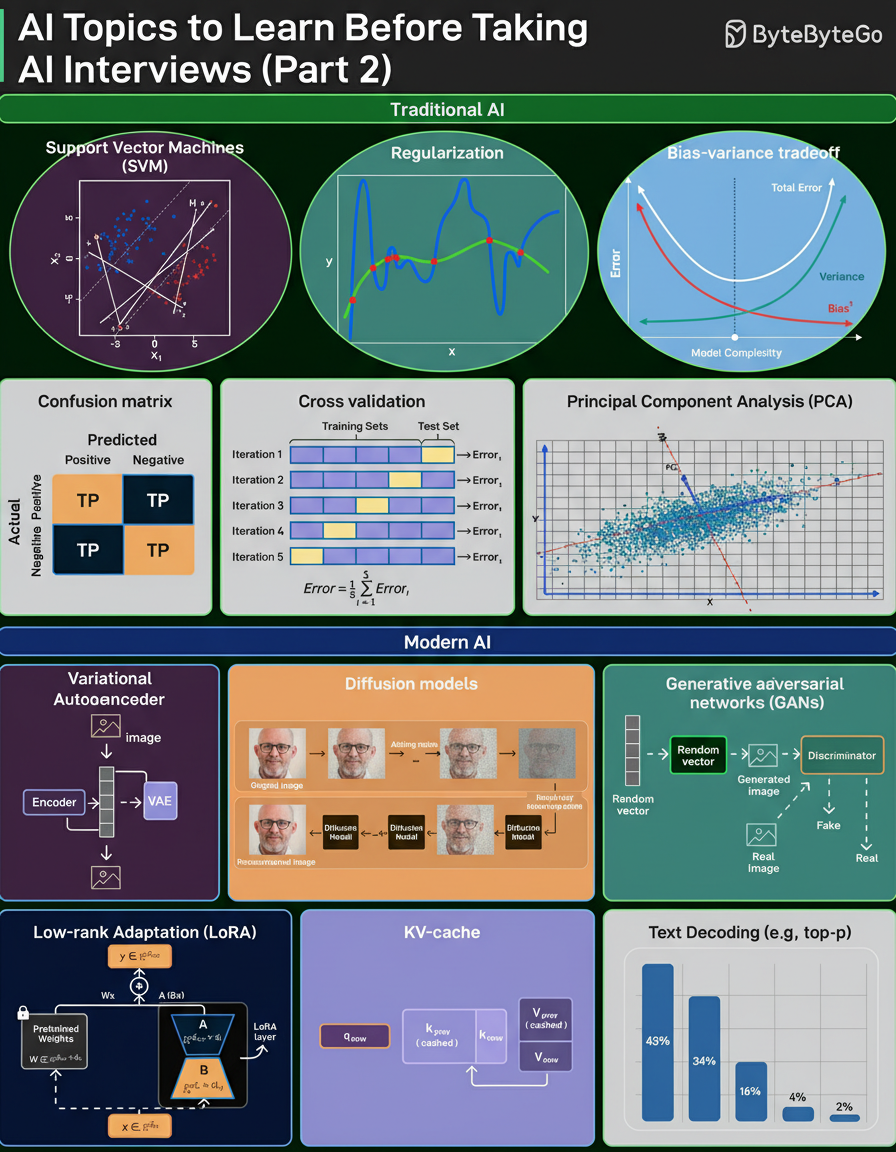

Interviews focused on artificial intelligence emphasize fundamental concepts rather than specific tools. These fundamentals are commonly divided into two categories: Traditional AI and Modern AI.

Traditional AI covers core machine learning topics predominantly established before neural networks gained prominence. In contrast, Modern AI centers on neural network principles and newer developments such as transformers, retrieval-augmented generation (RAG), and post-training techniques.

Interviewers typically expect candidates to understand both categories well, including the mechanisms, failure modes, and inherent trade-offs. Working through this checklist thoroughly is solid preparation for AI/ML interviews.

It is worth reflecting on which of these topics is hardest to explain under interview pressure, and on which other topics belong on the list.

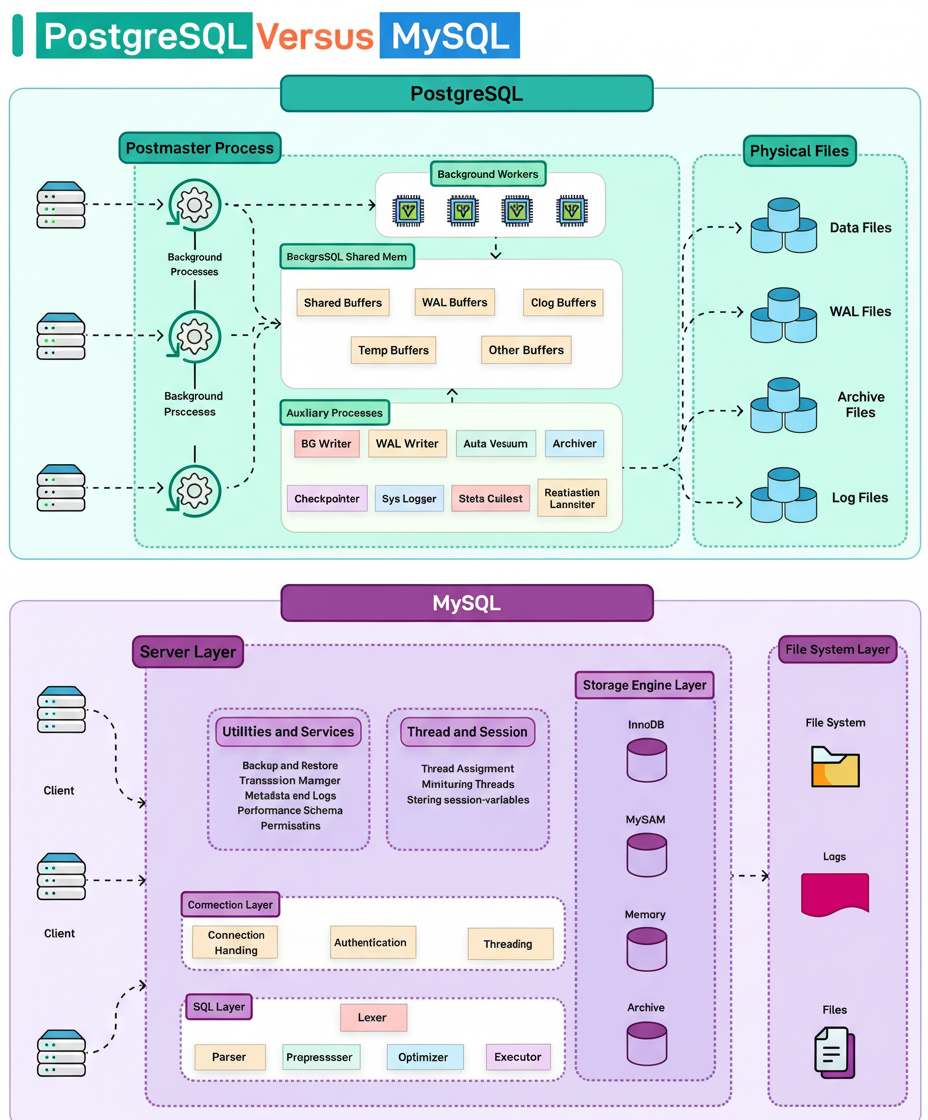

PostgreSQL, implemented in C, uses a process-based architecture that can be pictured as a factory in which a Postmaster supervises specialized worker processes. Each client connection is served by its own backend process, and all backends share a common memory area. Separate background processes handle work such as writing data to disk, vacuuming, and logging.

In contrast, MySQL utilizes a thread-based design akin to a single multitasking brain. It incorporates a layered structure where a central server manages multiple client connections through threads. Pluggable storage engines, including InnoDB and MyISAM, allow customization based on specific application requirements.
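The difference between the two connection models can be seen in a toy sketch. This is only an illustration under stated assumptions, not how either server is implemented: the function names `thread_per_connection` and `process_per_connection` are hypothetical, and a "connection handler" here does nothing except report the process ID it runs in.

```python
import os
import subprocess
import sys
import threading


def thread_per_connection(n_connections):
    """Handle each 'connection' in a thread; return the PID each handler saw."""
    seen_pids = []

    def handle():
        # Threads live inside the server process, so they all report its PID.
        seen_pids.append(os.getpid())  # list.append is atomic in CPython

    threads = [threading.Thread(target=handle) for _ in range(n_connections)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return seen_pids


def process_per_connection(n_connections):
    """Handle each 'connection' in a child process; return each child's PID."""
    child_code = "import os; print(os.getpid())"
    seen_pids = []
    for _ in range(n_connections):
        # Each handler is a separate OS process with its own private memory.
        result = subprocess.run(
            [sys.executable, "-c", child_code],
            capture_output=True, text=True, check=True,
        )
        seen_pids.append(int(result.stdout))
    return seen_pids
```

Every thread handler reports the server's own PID, while every process handler reports a different one. That separation is why a process-based design needs an explicit shared memory area, whereas threads see the same memory by default.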

An assessment of database preferences often involves weighing these architectural differences.

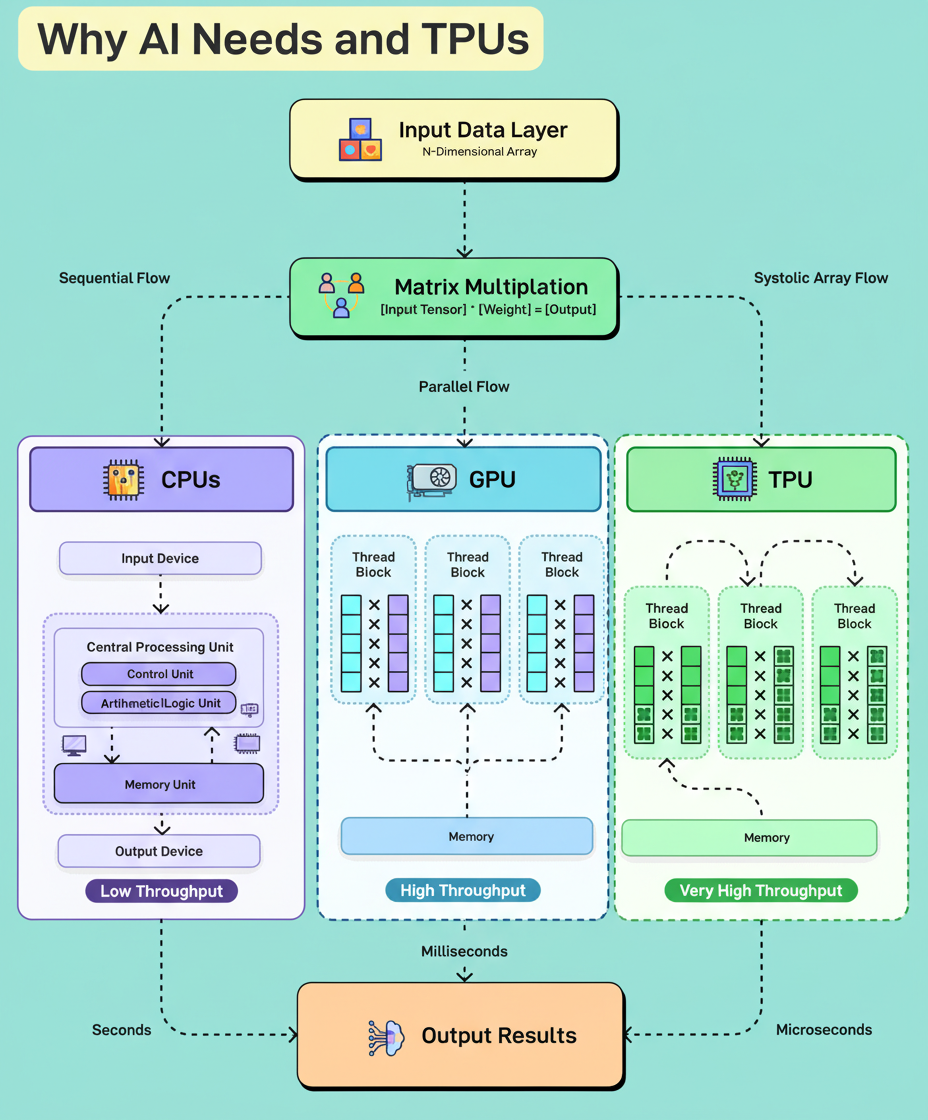

AI workloads consist largely of multiplying large numeric arrays, which adds up to billions of calculations. A CPU works through these calculations mostly sequentially and must move data between processor and memory again and again, which makes it inefficient for computations at this scale.

GPUs speed this up by running the same operation in parallel across thousands of small cores, which greatly reduces computation time. TPUs (Tensor Processing Units) go further for matrix math by using a systolic array: a grid of multiply-accumulate cells in which each cell holds a stored weight, multiplies it by the incoming data, adds the product to a partial sum moving down its column, and passes the result on to the next cell. Because intermediate results move directly from cell to cell instead of back to memory, this design minimizes memory traffic and can outperform CPUs and GPUs on matrix-heavy workloads.
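The systolic dataflow can be sketched in a few lines of Python. This is a simplified, hypothetical model rather than a cycle-accurate simulation: the function name `systolic_matmul` is an assumption, the loops run one after another where real hardware would run every cell at once, and the timing skew of the inputs is ignored.

```python
def systolic_matmul(inputs, weights):
    """Multiply inputs (M x K) by weights (K x N) in weight-stationary style.

    weights[k][n] plays the role of the value stored in grid cell (k, n).
    """
    k_dim, n_dim = len(weights), len(weights[0])
    outputs = []
    for row in inputs:                 # each input row streams through the grid
        partial = [0] * n_dim          # partial sums entering the top of each column
        for k in range(k_dim):         # grid row k receives the input value row[k]
            for n in range(n_dim):     # on hardware, these cells work simultaneously
                # Multiply the stored weight by the incoming value and add it
                # to the partial sum flowing down column n.
                partial[n] += row[k] * weights[k][n]
        outputs.append(partial)        # finished sums leave the bottom of the grid
    return outputs


print(systolic_matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]]))
# prints [[19, 22], [43, 50]]
```

The point of the sketch is that the weights are loaded once and never move, while partial sums are handed from cell to cell, so no intermediate result has to go back to memory.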

Further explanations of why AI workloads need GPUs and TPUs are welcome and would deepen the discussion.

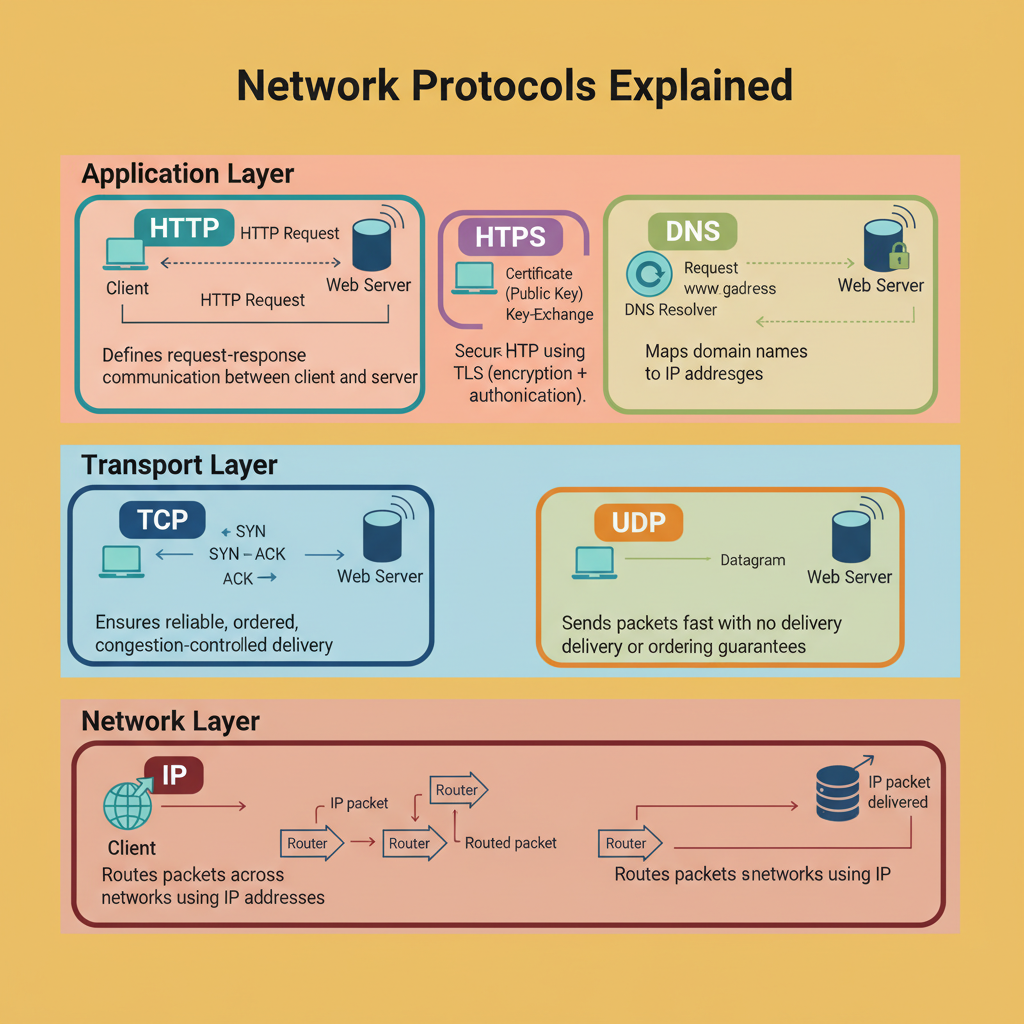

When a URL is entered into a browser, several network protocols work together to make the communication happen. Familiar protocols like HTTP and HTTPS are only the top of a layered protocol stack in which each layer was designed to solve a specific networking problem.

At the uppermost layer, HTTP establishes a stateless request-response communication model used by browsers, APIs, and microservices. HTTPS secures this communication by incorporating TLS for encryption and server authentication.
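To make the request-response model concrete, here is a minimal sketch that builds an HTTP/1.1 request and parses the first line of a response, without touching the network. The helper names `build_request` and `parse_status_line` are hypothetical. Because HTTP is stateless, the request has to carry everything the server needs, such as the target host and path.

```python
def build_request(host, path="/"):
    """Return the bytes of a minimal HTTP/1.1 GET request."""
    return (
        f"GET {path} HTTP/1.1\r\n"
        f"Host: {host}\r\n"
        "Connection: close\r\n"
        "\r\n"                      # blank line ends the header section
    ).encode("ascii")


def parse_status_line(raw_response):
    """Split the first response line into (version, status code, reason)."""
    status_line = raw_response.split(b"\r\n", 1)[0].decode("ascii")
    parts = status_line.split(" ", 2)
    reason = parts[2] if len(parts) > 2 else ""
    return parts[0], int(parts[1]), reason


print(build_request("example.com", "/index.html"))
print(parse_status_line(b"HTTP/1.1 200 OK\r\nContent-Length: 0\r\n\r\n"))
# second line prints ('HTTP/1.1', 200, 'OK')
```

With HTTPS the same bytes are exchanged, but they travel inside a TLS-encrypted channel instead of being sent in the clear.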

Before any HTTP request can be sent, DNS translates the human-readable domain name into a machine-routable IP address so that packets can be routed to the right host.
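A lookup of this kind can be sketched with Python's standard `socket.getaddrinfo`, which asks the system resolver for the addresses behind a name. The wrapper name `resolve` and the default port are assumptions made for illustration, and the addresses returned depend on the resolver and network in use.

```python
import socket


def resolve(hostname, port=443):
    """Return the sorted set of IP addresses the system resolver gives for hostname."""
    infos = socket.getaddrinfo(hostname, port, type=socket.SOCK_STREAM)
    # Each entry is (family, type, proto, canonname, sockaddr);
    # the IP address is the first element of sockaddr.
    return sorted({info[4][0] for info in infos})


print(resolve("localhost"))
```

Calling `resolve("example.com")` works the same way, provided the machine has network access and a working resolver.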

The transport layer includes TCP, which ensures reliable connection-oriented communication employing mechanisms like the three-way handshake and retransmission of lost packets, and UDP, which offers connectionless, fast datagram transmission suited for real-time applications like streaming and gaming. Protocols such as QUIC also utilize UDP for improved performance.
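The contrast between the two transports shows up directly in the socket API. The sketch below is a minimal loopback example with hypothetical helper names (`tcp_echo_once`, `udp_send_once`). It assumes a short message that fits in a single `recv` call, which real TCP code should not rely on.

```python
import socket
import threading


def tcp_echo_once(message):
    """Send message over a TCP connection on loopback and return the echo."""
    server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    server.bind(("127.0.0.1", 0))          # port 0: let the OS pick a free port
    server.listen(1)
    port = server.getsockname()[1]

    def serve():
        # accept() hands back a connection whose three-way handshake
        # the kernel has already completed.
        conn, _ = server.accept()
        with conn:
            conn.sendall(conn.recv(1024))

    worker = threading.Thread(target=serve)
    worker.start()
    # TCP: a connection must be established before any data is sent.
    with socket.create_connection(("127.0.0.1", port), timeout=5) as client:
        client.sendall(message)
        reply = client.recv(1024)
    worker.join()
    server.close()
    return reply


def udp_send_once(message):
    """Send message as a single UDP datagram on loopback and return what arrives."""
    receiver = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    receiver.bind(("127.0.0.1", 0))
    receiver.settimeout(5)
    port = receiver.getsockname()[1]
    sender = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    # UDP: no connection setup and no handshake; the datagram is simply sent.
    sender.sendto(message, ("127.0.0.1", port))
    data, _ = receiver.recvfrom(1024)
    sender.close()
    receiver.close()
    return data
```

On loopback both calls return the message unchanged. Over a real network only the TCP version would retransmit a lost packet; the UDP receiver would time out instead.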

At the network layer, IP functions as the internet’s postal system, routing packets through intermediate devices without guaranteeing delivery order or reliability.

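Each layer has a narrowly defined job and works independently of the others, which is what lets the internet scale and stay robust. When communication fails, the observed symptoms usually indicate where to look first: DNS, TCP, or the application layer. For example, a name that does not resolve points to DNS, a connection that times out points to TCP, and an error status code points to the application.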