The AI open-source ecosystem is expanding rapidly. According to GitHub’s Octoverse 2025 report, the platform hosts more than 4.3 million AI-related repositories, and projects focused on large language models (LLMs) grew 178% year over year. Within this ecosystem, a handful of repositories stand out for their impact, each earning tens to hundreds of thousands of stars by giving developers tools to build autonomous agents, run models locally, and improve AI-driven workflows.

OpenClaw is the breakout success of 2026 and possibly the fastest-growing open-source project in GitHub’s history. Created by PSPDFKit founder Peter Steinberger, it jumped from 9,000 to more than 60,000 stars within days of going viral in late January 2026 and has since surpassed 210,000 stars. The project was originally named Clawdbot, briefly became Moltbot, and finally settled on the name OpenClaw.

At its core, OpenClaw is a personal AI assistant that runs entirely on the user’s own devices. It acts as a local gateway connecting AI models to more than 50 integrations, including WhatsApp, Telegram, Slack, Discord, Signal, and iMessage. Unlike cloud-based alternatives, it keeps data on the user’s machine. The assistant runs continuously and can browse the web, fill in forms, execute shell commands, write and run code, and control smart home devices. A distinctive feature is its ability to develop new skills on its own, expanding its capabilities without user intervention.

OpenClaw has been used to automate developer workflows, manage personal productivity, scrape the web, automate browsers, and schedule tasks proactively. On February 14, 2026, Steinberger announced that he was joining OpenAI and that OpenClaw would move under an open-source foundation. Security experts have flagged two risks: the extensive permissions the agent requires and the lack of rigorous vetting of uploaded skills for malicious behavior. They advise caution during setup.

n8n is an open-source workflow automation platform that combines a visual, no-code interface with full custom code capabilities, now extended with native AI functions. It supports over 400 integrations, can be self-hosted, and is distributed under a fair-code license, so technical teams keep full control over their automation pipelines and data.

Its AI-native design allows direct incorporation of large language models into workflows through LangChain integration. Users can create custom AI agent automations alongside conventional API calls, data transformation, and conditional logic. This approach bridges traditional business automation with advanced AI agent workflows. Organizations with stringent data governance benefit significantly from the self-hosting option.

Typical applications include AI-driven email triage, automated content pipelines, customer support workflows, data enrichment, and multi-step AI processing chains.

In contrast to the prevailing cloud API subscription model, Ollama is a lightweight, Go-based framework for running and managing large language models entirely on local hardware. No data is sent to external services, and it works fully offline.

Ollama provides straightforward commands to download, run, and serve various models such as Llama, Mistral, Gemma, and DeepSeek. Desktop applications for macOS and Windows allow users without development experience to utilize local AI capabilities. Strategic partnerships that support open-weight models from leading research labs have driven significant interest.
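Beyond the command line, Ollama also serves a local HTTP API that scripts can call. The following is a minimal sketch, assuming a default installation listening on `http://localhost:11434` and a model tagged `llama3` that has already been downloaded; both the address and the model tag are assumptions for illustration, not details from this article.

```python
import json
import urllib.request

# Assumed default address of a locally running Ollama server.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming text generation request for the local server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, timeout: float = 60.0) -> str:
    """Send the prompt to the local server and return the generated text."""
    request = build_generate_request(model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body["response"]


# Example call (needs a running server and the assumed model):
# print(generate("llama3", "Summarize what a local LLM runtime does."))
```

Because the request only targets localhost, the prompt and the response never leave the machine, which is the property the privacy argument above depends on.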

This project has become a foundational component of the local AI movement, empowering developers to experiment and deploy LLMs in privacy-sensitive or cost-conscious contexts. Ollama integrates well with tools such as Open WebUI to create completely self-hosted alternatives to commercial AI chat solutions.

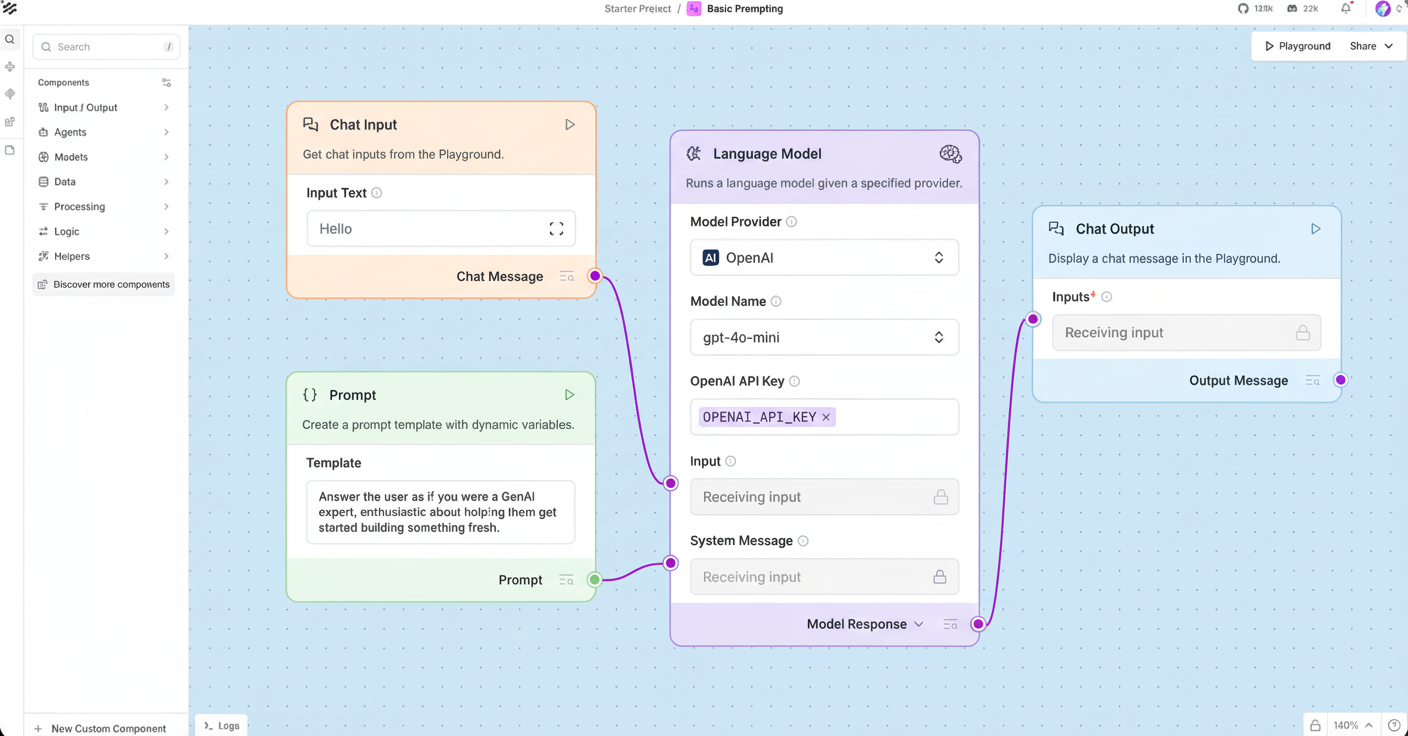

Langflow is a low-code platform engineered for designing and deploying AI-powered agents and retrieval-augmented generation (RAG) workflows, leveraging the LangChain framework. It features a drag-and-drop interface for assembling sequences of prompts, tools, memory elements, and data connectors, supporting all key LLMs and vector databases.

Developers can visually manage multi-agent conversations, memory systems, and retrieval layers, deploying these workflows as APIs or standalone apps without extensive backend development. Tasks that previously required weeks of coding can often be constructed within hours.

Langflow has attracted a vibrant community of data scientists and engineers. Common use cases encompass RAG pipeline prototyping, multi-agent dialogue design, custom chatbot development, and rapid LLM application assembly.

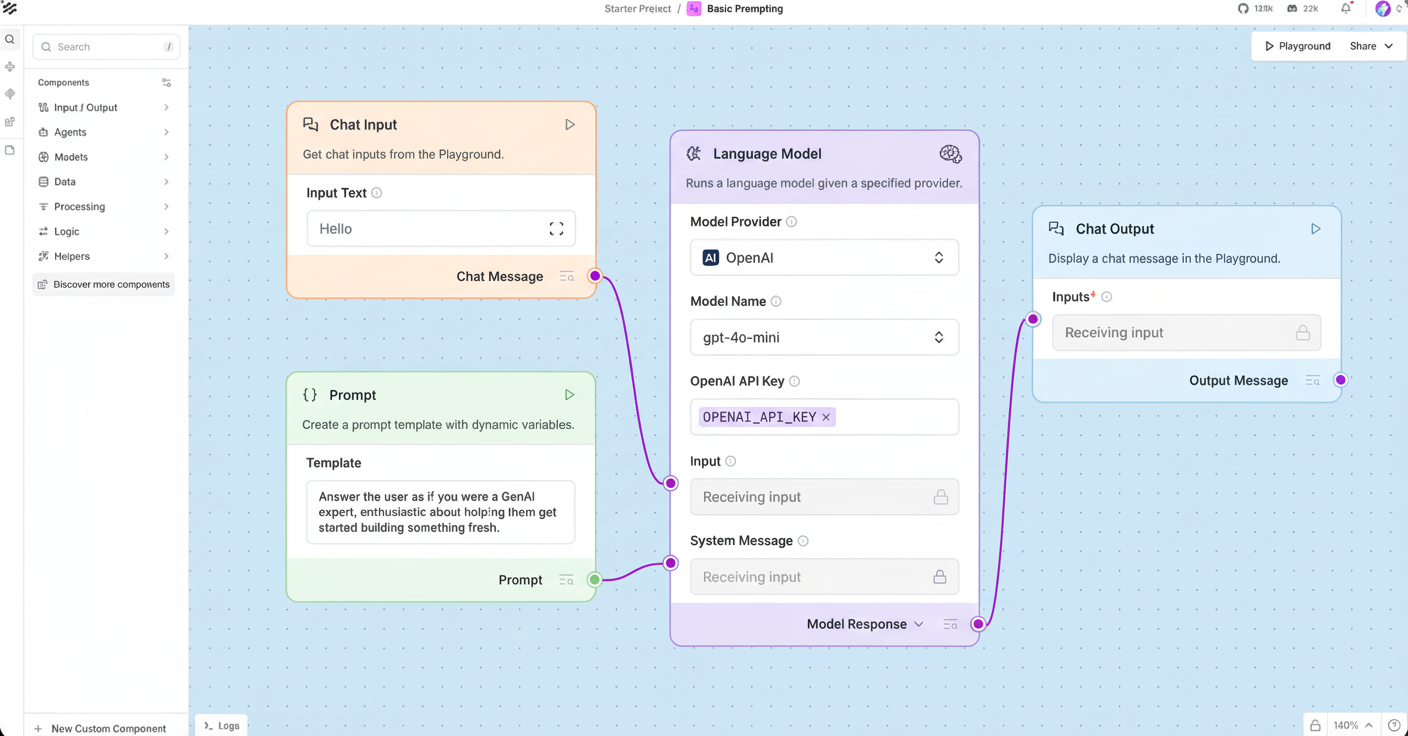

Dify is a production-grade platform for developing agentic workflows, with an integrated toolchain to build, deploy, and manage AI applications. Developed primarily in TypeScript, it supports AI implementations ranging from enterprise Q&A bots to custom AI-driven assistants.

The platform includes a workflow builder for creating tool-using agents, built-in RAG pipeline handling, and compatibility with multiple AI model providers such as OpenAI, Anthropic, and open-source LLMs. It also offers usage monitoring, local and cloud deployment options, and Model Context Protocol (MCP) integration. Dify abstracts away infrastructure complexity so teams can concentrate on agent logic.

Dify addresses the needs of teams seeking rapid deployment of AI-powered services through an open-source, self-hosted framework. Its applications span enterprise chatbots, AI internal tools, customer support automation, and orchestrating multiple models.

LangChain has established itself as the essential framework for constructing robust AI agents in Python. It offers modular components supporting chains, agents, memory, retrieval, tool usage, and multi-agent orchestration. The related LangGraph project extends capabilities to complex, stateful workflows including loops and conditional paths.
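To make "stateful workflows including loops and conditional paths" concrete, here is a toy graph runner in plain Python. It is only an illustration of the pattern and deliberately does not use the LangChain or LangGraph APIs; the names `run_graph`, `draft`, `review`, and `route` are hypothetical.

```python
from typing import Callable, Dict

State = Dict[str, object]
Node = Callable[[State], State]
Router = Callable[[str, State], str]

END = "END"  # sentinel node name that stops the run


def run_graph(nodes: Dict[str, Node], router: Router, start: str,
              state: State, max_steps: int = 20) -> State:
    """Run nodes one at a time, letting the router pick the next node."""
    current = start
    for _ in range(max_steps):
        state = nodes[current](state)     # each node returns a new state
        current = router(current, state)  # conditional edge based on state
        if current == END:
            return state
    raise RuntimeError("step limit reached without finishing")


def draft(state: State) -> State:
    # Stand-in for an LLM call that produces another draft.
    return {**state, "attempts": state["attempts"] + 1}


def review(state: State) -> State:
    # Stand-in for a quality check; approves on the third attempt.
    return {**state, "approved": state["attempts"] >= 3}


def route(current: str, state: State) -> str:
    if current == "draft":
        return "review"
    # Loop back to drafting until the review passes.
    return END if state["approved"] else "draft"


final_state = run_graph(
    {"draft": draft, "review": review},
    route,
    start="draft",
    state={"attempts": 0, "approved": False},
)
```

The state dictionary carries everything between steps, and the router is the only place where control flow is decided, which is what makes loops and branches easy to reason about. The step limit guards against a workflow that never reaches its end condition.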

Many projects listed here integrate directly with LangChain or build upon it, making it the connective core of the AI agent ecosystem. It integrates with models from Anthropic, OpenAI, Google, and other major model providers.

Developers rely on LangChain for multi-agent systems, tool-enabled AI agents, RAG pipelines, conversational AI, and structured data extraction solutions.

Open WebUI is a self-hosted AI platform designed for offline operation, boasting more than 282 million downloads and over 124,000 stars. It delivers a refined ChatGPT-style web interface that connects to Ollama, OpenAI-compatible APIs, and various LLM runners, all installable via a single pip command.

Its extensive features include a built-in RAG inference engine, hands-free voice and video calls with multiple speech-to-text and text-to-speech providers, a model builder for custom agents, native Python function calls, and persistent artifact storage. Enterprises benefit from SSO, role-based access control, and audit logging. A community marketplace offers prompts, tools, and functions for easy platform extension.

Where Ollama powers local models, Open WebUI supplies the user interface. Together, these tools form a widely adopted self-hosted AI stack with primary use cases in private ChatGPT alternatives, multi-model comparisons, collaborative AI platforms, and RAG-supported document question answering.

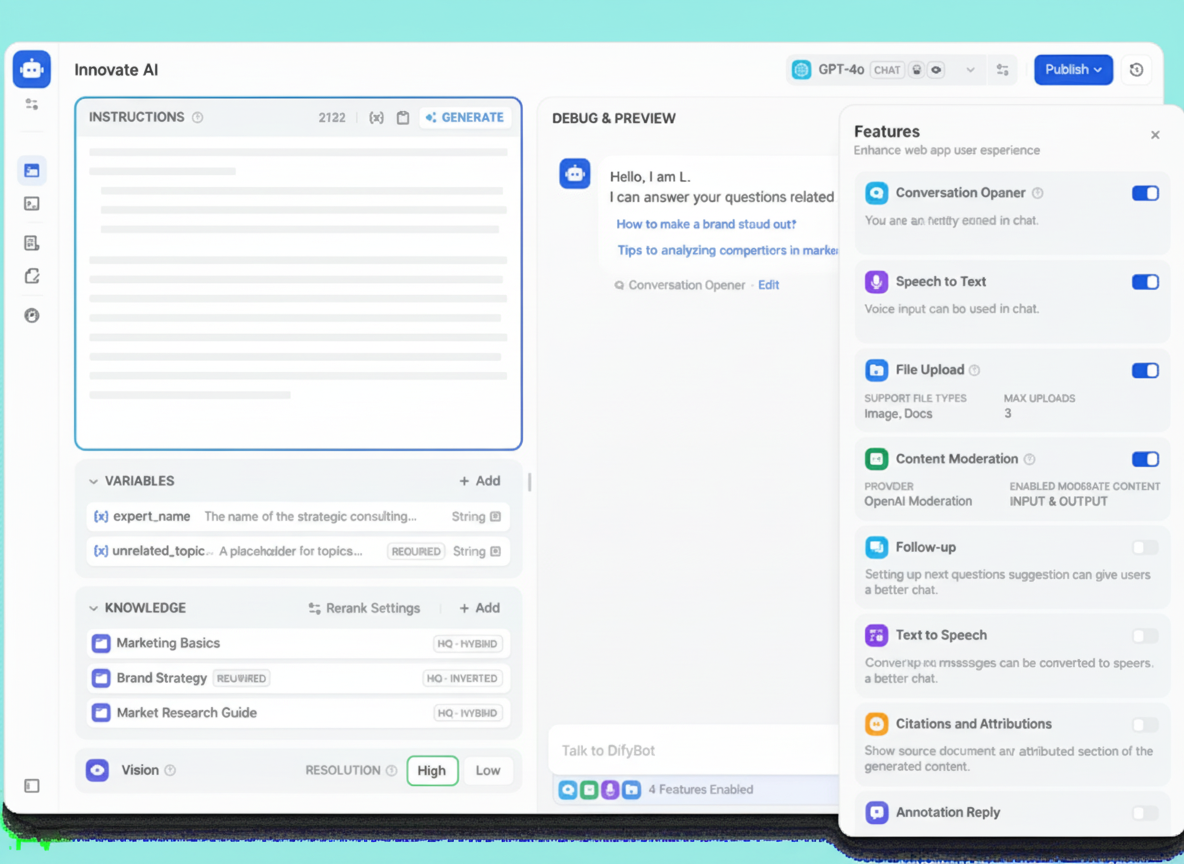

DeepSeek-V3 has impressed the AI community with an entirely open-weight release that delivers benchmark results comparable to top proprietary models such as GPT-4.

Built on a Mixture-of-Experts (MoE) architecture, it is optimized for general-purpose reasoning and supports long contexts of up to 128K tokens. Innovations such as distilled reasoning chains have set new standards within the open model community.
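The core idea of a Mixture-of-Experts layer is that a router sends each input to only a few experts rather than all of them. The sketch below shows generic top-k softmax routing in plain Python. It is a simplified illustration under that assumption and not DeepSeek-V3's actual router; the function names and the tiny "experts" are hypothetical.

```python
import math
from typing import Callable, List, Sequence, Tuple

Vector = List[float]
Expert = Callable[[Sequence[float]], Vector]


def softmax(scores: Sequence[float]) -> Vector:
    """Numerically stable softmax over router scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(x: Sequence[float],
                gate_weights: Sequence[Sequence[float]],
                experts: Sequence[Expert],
                top_k: int = 2) -> Tuple[Vector, List[int]]:
    """Route x to the top_k experts and mix their outputs."""
    # One router score per expert: dot product of its gate row with x.
    scores = [sum(w * v for w, v in zip(row, x)) for row in gate_weights]
    probs = softmax(scores)
    # Keep only the top_k most probable experts; the rest are never run.
    chosen = sorted(range(len(experts)), key=lambda i: probs[i], reverse=True)[:top_k]
    norm = sum(probs[i] for i in chosen)
    output = [0.0] * len(x)
    for i in chosen:
        weight = probs[i] / norm  # renormalize over the chosen experts
        expert_out = experts[i](x)
        output = [o + weight * e for o, e in zip(output, expert_out)]
    return output, chosen


# Three toy experts that simply scale their input by 1, 2, and 3.
toy_experts: List[Expert] = [
    lambda v: [1.0 * a for a in v],
    lambda v: [2.0 * a for a in v],
    lambda v: [3.0 * a for a in v],
]
toy_gate = [[1.0, 0.0], [0.0, 1.0], [-1.0, -1.0]]
mixed, selected = moe_forward([2.0, 1.0], toy_gate, toy_experts, top_k=2)
```

Only the selected experts do any work for a given input, which is why MoE models can have a very large total parameter count while keeping the compute per token comparatively low.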

DeepSeek-V3 demonstrates that the open-source community can achieve model performance on par with the best proprietary options. This development is significant for developers seeking high-performance AI without vendor lock-in, recurring API fees, or privacy compromises. The model is freely available for commercial use and can be fine-tuned for specific domains. It operates locally via Ollama and is frequently chosen for powering custom AI agents and enterprise chatbots.

The Google Gemini CLI represents Google’s open-source entry into the agentic coding domain by integrating the Gemini multimodal model directly into the developer terminal environment.

By using a simple npx command, developers can interact with, command, and automate tasks using the Gemini model from the command line. Features include code assistance, natural language queries, integration with Google Cloud services, and embedding within scripts and CI/CD pipelines.

This tool hides the complexity of direct API usage and gives immediate access to frontier AI capabilities from any terminal. Popular applications include AI-assisted programming, command-line task automation, batch file processing, and rapid prototyping.

RAGFlow is an open-source retrieval-augmented generation engine that merges sophisticated RAG techniques with autonomous agent functionalities to establish a reliable contextual layer for LLMs.

It offers a comprehensive framework including document ingestion, vector indexing, query planning, and tool-enabled agents capable of invoking external APIs beyond basic text retrieval. Additional features encompass citation tracking and multi-step reasoning, essential for enterprise environments demanding answer traceability.
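The ingestion, indexing, retrieval, and citation steps can be illustrated with a toy pipeline. This sketch uses simple word-count vectors and cosine similarity in place of a real embedding model and vector database, and it does not use RAGFlow's API; every name and sample document in it is hypothetical.

```python
import math
import re
from collections import Counter
from typing import Dict, List, Tuple


def vectorize(text: str) -> Counter:
    """Turn text into a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def build_index(docs: Dict[str, str]) -> Dict[str, Counter]:
    """Ingest documents into an in-memory index keyed by document id."""
    return {doc_id: vectorize(text) for doc_id, text in docs.items()}


def retrieve(index: Dict[str, Counter], query: str,
             top_k: int = 2) -> List[Tuple[str, float]]:
    """Return up to top_k (doc_id, score) pairs with a non-zero score."""
    q = vectorize(query)
    scored = [(doc_id, cosine(q, vec)) for doc_id, vec in index.items()]
    scored = [pair for pair in scored if pair[1] > 0.0]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:top_k]


def build_prompt(docs: Dict[str, str], hits: List[Tuple[str, float]],
                 question: str) -> str:
    """Label each retrieved passage with its id so answers can cite it."""
    context = "\n".join(f"[{doc_id}] {docs[doc_id]}" for doc_id, _ in hits)
    return (
        "Answer using only the sources below and cite their ids.\n"
        f"{context}\nQuestion: {question}"
    )


sample_docs = {
    "policy-1": "Refunds are issued within 14 days of purchase.",
    "policy-2": "Support is available by email on weekdays.",
    "faq-3": "Shipping takes five business days.",
}
question = "How many days do refunds take?"
hits = retrieve(build_index(sample_docs), question)
prompt = build_prompt(sample_docs, hits, question)
```

Keeping the document id attached to every retrieved passage is what makes traceability possible: the model is asked to cite ids, and each id maps back to a stored source.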

As AI systems progress beyond simple chatbot models toward production-ready deployments, RAGFlow confronts the core challenge of producing grounded, traceable, and dependable AI responses. With over 70,000 stars, it has become a foundational component in enterprise knowledge bases, compliance-driven AI solutions, research assistance, and multifaceted data analysis workflows.

Claude Code is Anthropic’s terminal-based coding agent. After installation, it comprehends the full codebase context and executes developer instructions phrased in natural language. It can refactor functions, explain files, generate unit tests, manage git operations, and perform complex multi-file modifications through conversational interaction.

Unlike simpler code completion tools, Claude Code reasons about entire projects, carries out multi-step procedures, and maintains context across extended programming sessions. It works as an AI pair programmer that understands the project structure in depth and can act on the code autonomously.

Typical uses include comprehensive codebase refactoring, automated test creation, code review and explanation, and automation of git workflows.

Several prevailing trends characterize this evolving AI open-source ecosystem:

Projects such as Ollama, Open WebUI, and OpenClaw collectively signify a major shift toward running AI locally on individual hardware. Privacy concerns, API cost reduction, and the pursuit of extensive customization are primary motivators driving this local AI trend. The self-hosted AI infrastructure has advanced to the point where launching a comprehensive AI platform can be accomplished with a single command.

Nearly all prominent repositories now integrate autonomous agent functionalities, reflecting the transition from reactive AI to proactive agent AI. These agents can navigate the web, execute scripts, manage files, orchestrate multi-stage workflows, and even enhance their own abilities autonomously while running continuously on local systems.

The success of DeepSeek-V3 illustrates that open-weight models can rival leading proprietary models. When combined with efficient local runtimes like Ollama, developers can build advanced AI applications free from API dependencies, fundamentally transforming AI development economics.

Platforms such as Langflow, Dify, and n8n highlight that drag-and-drop visual interfaces are becoming preferred for designing AI agent pipelines. This democratizes AI development, enabling domain experts without machine learning expertise to build sophisticated AI applications, significantly lowering the barrier for production AI deployment.

The repositories covered here are more than passing trends; they are the building blocks of the emerging AI infrastructure stack. Developers who are building AI-driven products, automating workflows, or experimenting with frontier models will find these tools to be proven, community-endorsed starting points. Innovation in this space shows no sign of slowing, and it deserves the attention of anyone with a stake in AI technology.